Reputation: 199304

faster implementation of sum ( for Codility test )

How can the following simple implementation of sum be faster?

private long sum( int [] a, int begin, int end ) {

if( a == null ) {

return 0;

}

long r = 0;

for( int i = begin ; i < end ; i++ ) {

r+= a[i];

}

return r;

}

EDIT

Background is in order.

Reading latest entry on coding horror, I came to this site: http://codility.com which has this interesting programming test.

Anyway, I got 60 out of 100 in my submission, and basically ( I think ) is because this implementation of sum, because those parts where I failed are the performance parts. I'm getting TIME_OUT_ERROR's

So, I was wondering if an optimization in the algorithm is possible.

So, no built in functions or assembly would be allowed. This my be done in C, C++, C#, Java or pretty much in any other.

EDIT

As usual, mmyers was right. I did profile the code and I saw most of the time was spent on that function, but I didn't understand why. So what I did was to throw away my implementation and start with a new one.

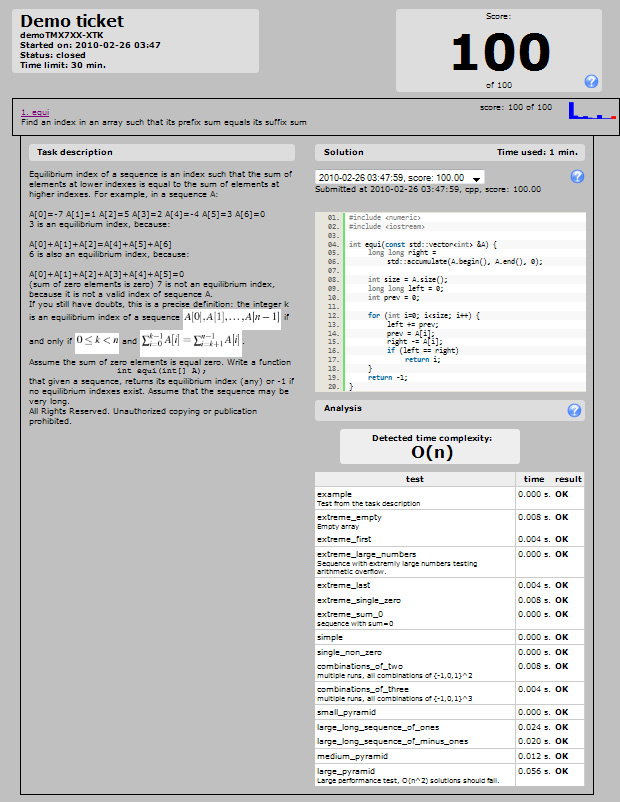

This time I've got an optimal solution [ according to San Jacinto O(n) -see comments to MSN below - ]

This time I've got 81% on Codility which I think is good enough. The problem is that I didn't take the 30 mins. but around 2 hrs. but I guess that leaves me still as a good programmer, for I could work on the problem until I found an optimal solution:

Here's my result.

I never understood what is those "combinations of..." nor how to test "extreme_first"

Upvotes: 11

Views: 30564

Answers (21)

Reputation: 284

This may be old, but here is solution in Golang with 100% pass rate:

package solution

func Solution(A []int) int {

// write your code in Go 1.4

var left int64

var right int64

var equi int

equi = -1

if len(A) == 0 {

return equi

}

left = 0

for _, el := range A {

right += int64(el)

}

for i, el := range A {

right -= int64(el)

if left == right {

equi = i

}

left += int64(el)

}

return equi

}

Upvotes: -2

Reputation: 1

Here is my answer with with explanations on how to go about it. It will get you 100%

class Solution

{

public int solution(int[] A)

{

long sumLeft = 0; //Variable to hold sum of elements to the left of the current index

long sumRight = 0; //Variable to hold sum of elements to the right of the current index

long sum = 0; //Variable to hold sum of all elements in the array

long leftHolder = 0; //Variable that holds the sum of all elements to the left of the current index, including the element accessed by the current index

//Calculate the total sum of all elements in the array and store it in the sum variable

for (int i = 0; i < A.Length; i++)

{

//sum = A.Sum();

sum += A[i];

}

for (int i = 0; i < A.Length; i++)

{

//Calculate the sum of all elements before the current element plus the current element

leftHolder += A[i];

//Get the sum of all elements to the right of the current element

sumRight = sum - leftHolder;

//Get the sum of all elements of elements to the left of the current element.We don't include the current element in this sum

sumLeft = sum - sumRight - A[i];

//if the sum of the left elements is equal to the sum of the right elements. Return the index of the current element

if (sumLeft == sumRight)

return i;

}

//Otherwise return -1

return -1;

}

}

Upvotes: -1

Reputation: 32888

This got me 100% in Javascript:

function solution(A) {

if (!(A) || !(Array.isArray(A)) || A.length < 1) {

return -1;

}

if (A.length === 1) {

return 0;

}

var sum = A.reduce(function (a, b) { return a + b; }),

lower = 0,

i,

val;

for (i = 0; i < A.length; i++) {

val = A[i];

if (((sum - lower) - val) === (lower)) {

return i;

}

lower += val;

}

return -1;

}

Upvotes: -1

Reputation: 61

Here is my solution and I scored 100%

public static int solution(int[] A)

{

double sum = A.Sum(d => (double)d);

double leftSum=0;

for (int i = 0; i < A.Length; i++){

if (leftSum == (sum-leftSum-A[i])) {

return i;

}

else {

leftSum = leftSum + A[i];

}

}

return -1;

}

Upvotes: 6

Reputation: 17

100% correctness and performance of this code is tested

Private Function equi(ByVal A() As Integer) As Integer

Dim index As Integer = -1

If A.Length > 0 And Not IsDBNull(A) Then

Dim sumLeft As Long = 0

Dim sumRight As Long = ArraySum(A)

For i As Integer = 0 To A.Length - 1

Dim val As Integer = A(i)

sumRight -= val

If sumLeft = sumRight Then

index = i

End If

sumLeft += val

Next

End If

Return index

End Function

Upvotes: 0

Reputation: 1

private static int equi ( int[] A ) {

if (A == null || A.length == 0)

return -1;

long tot = 0;

int len = A.length;

for(int i=0;i<len;i++)

tot += A[i];

if(tot == 0)

return (len-1);

long partTot = 0;

for(int i=0;i<len-1;i++)

{

partTot += A[i];

if(partTot*2+A[i+1] == tot)

return i+1;

}

return -1;

}

I considered the array as a bilance so if the equilibrium index exist then half of the weight is on the left. So I only compare the partTot (partial total) x 2 with the total weight of the array. the Alg takes O(n) + O(n)

Upvotes: 0

Reputation: 77

Here is a thought:

private static ArrayList equi(int[] A)

{

ArrayList answer = new ArrayList();

//if(A == null) return -1;

if ((answer.Count == null))

{

answer.Add(-1);

return answer;

}

long sum0 = 0, sum1 = 0;

for (int i = 0; i < A.Length; i++) sum0 += A[i];

for (int i = 0; i < A.Length; i++)

{

sum0 -= A[i];

if (i > 0) { sum1 += A[i - 1]; }

if (sum1 == sum0) answer.Add(i);

//return i;

}

//return -1;

return answer;

}

Upvotes: 1

Reputation: 10350

100% O(n) solution in C

int equi ( int A[], int n ) {

long long sumLeft = 0;

long long sumRight = 0;

int i;

if (n <= 0) return -1;

for (i = 1; i < n; i++)

sumRight += A[i];

i = 0;

do {

if (sumLeft == sumRight)

return i;

sumLeft += A[i];

if ((i+1) < n)

sumRight -= A[i+1];

i++;

} while (i < n);

return -1;

}

Probably not perfect but it passes their tests anyway :)

Can't say I'm a big fan of Codility though - it is an interesting idea, but I found the requirements a little too vague. I think I'd be more impressed if they gave you requirements + a suite of unit tests that test those requirements and then asked you to write code. That's how most TDD happens anyway. I don't think doing it blind really gains anything other than allowing them to throw in some corner cases.

Upvotes: 0

Reputation: 9

{In Pascal + Assembly}

{$ASMMODE INTEL}

function equi (A : Array of longint; n : longint ) : longint;

var c:Longint;

label noOverflow1;

label noOverflow2;

label ciclo;

label fine;

label Over;

label tot;

Begin

Asm

DEC n

JS over

XOR ECX, ECX {Somma1}

XOR EDI, EDI {Somma2}

XOR EAX, EAX

MOV c, EDI

MOV ESI, n

tot:

MOV EDX, A

MOV EDX, [EDX+ESI*4]

PUSH EDX

ADD ECX, EDX

JNO nooverflow1

ADD c, ECX

nooverflow1:

DEC ESI

JNS tot;

SUB ECX, c

SUB EDI, c

ciclo:

POP EDX

SUB ECX, EDX

CMP ECX, EDI

JE fine

ADD EDI, EDX

JNO nooverflow2

DEC EDI

nooverflow2:

CMP EAX, n

JA over

INC EAX

JMP ciclo

over:

MOV EAX, -1

fine:

end;

End;

Upvotes: -1

Reputation: 1915

I don't think your problem is with the function that's summing the array, it's probably that you're summing the array WAY to frequently. If you simply sum the WHOLE array once, and then step through the array until you find the first equilibrium point you should decrease the execution time sufficiently.

int equi ( int[] A ) {

int equi = -1;

long lower = 0;

long upper = 0;

foreach (int i in A)

upper += i;

for (int i = 0; i < A.Length; i++)

{

upper -= A[i];

if (upper == lower)

{

equi = i;

break;

}

else

lower += A[i];

}

return equi;

}

Upvotes: 7

Reputation: 1022

This won't help you with an O(n^2) algorithm, but you can optimize your sum.

At a previous company, we had Intel come by and give us optimization tips. They had one non-obvious and somewhat cool trick. Replace:

long r = 0;

for( int i = begin ; i < end ; i++ ) {

r+= a[i];

}

with

long r1 = 0, r2 = 0, r3 = 0, r4 = 0;

for( int i = begin ; i < end ; i+=4 ) {

r1+= a[i];

r2+= a[i + 1];

r3+= a[i + 2];

r4+= a[i + 3];

}

long r = r1 + r2 + r3 + r4;

// Note: need to be clever if array isn't divisible by 4

Why this is faster: In the original implementation, your variable r is a bottleneck. Every time through the loop, you have to pull data from memory array a (which takes a couple cycles), but you can't do multiple pulls in parallel, because the value of r in the next iteration of the loop depends on the value of r in this iteration of the loop. In the second version, r1, r2, r3, and r4 are independent, so the processor can hyperthread their execution. Only at the very end do they come together.

Upvotes: 1

Reputation: 490518

This code is simple enough that unless a is quite small, it's probably going to be limited primarily by memory bandwidth. As such, you probably can't hope for any significant gain by working on the summing part itself (e.g., unrolling the loop, counting down instead of up, executing sums in parallel -- unless they're on separate CPUs, each with its own access to memory). The biggest gain will probably come from issuing some preload instructions so most of the data will already be in the cache by the time you need it. The rest will just (at best) get the CPU to hurry up more, so it waits longer.

Edit: It appears that most of what's above has little to do with the real question. It's kind of small, so it may be difficult to read, but, I tried just using std::accumulate() for the initial addition, and it seemed to think that was all right:

Upvotes: 5

Reputation: 32243

I did the same naive implementation and here's my O(n) solution. I did not use the IEnumerable Sum method because it was not available at Codility. My solution still doesn't check for overflow in case the input has large numbers so it's failing that particular test on Codility.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

namespace ConsoleApplication2

{

class Program

{

static void Main(string[] args)

{

var list = new[] {-7, 1, 5, 2, -4, 3, 0};

Console.WriteLine(equi(list));

Console.ReadLine();

}

static int equi(int[] A)

{

if (A == null || A.Length == 0)

return -1;

if (A.Length == 1)

return 0;

var upperBoundSum = GetTotal(A);

var lowerBoundSum = 0;

for (var i = 0; i < A.Length; i++)

{

lowerBoundSum += (i - 1) >= 0 ? A[i - 1] : 0;

upperBoundSum -= A[i];

if (lowerBoundSum == upperBoundSum)

return i;

}

return -1;

}

private static int GetTotal(int[] ints)

{

var sum = 0;

for(var i=0; i < ints.Length; i++)

sum += ints[i];

return sum;

}

}

}

Upvotes: 0

Reputation: 660435

Some tips:

Use a profiler to identify where you're spending a lot of time.

Write good performance tests so that you can tell the exact effect of every single change you make. Keep careful notes.

If it turns out that the bottleneck is the checks to ensure that you're dereferencing a legal address inside the array, and you can guarantee that begin and end are in fact both inside the array, then consider fixing the array, making a pointer to the array, and doing the algorithm in pointers rather than arrays. Pointers are unsafe; they do not spend any time checking to make sure you're still inside the array, so therefore they can be somewhat faster. But you take responsibility then for ensuring that you do not corrupt every byte of memory in the address space.

Upvotes: 4

Reputation: 3647

In C# 3.0, my computer and my OS this is faster as long as you can guarantee that 4 consecutive numbers won't overflow the range of an int, probably because most additions are done using 32-bit math. However using a better algorithm usually provides higher speed up than any micro-optimization.

Time for a 100 millon elements array:

4999912596452418 -> 233ms (sum)

4999912596452418 -> 126ms (sum2)

private static long sum2(int[] a, int begin, int end)

{

if (a == null) { return 0; }

long r = 0;

int i = begin;

for (; i < end - 3; i+=4)

{

//int t = ;

r += a[i] + a[i + 1] + a[i + 2] + a[i + 3];

}

for (; i < end; i++) { r += a[i]; }

return r;

}

Upvotes: 1

Reputation: 6465

Just some thought, not sure if accessing the pointer directly be faster

int* pStart = a + begin;

int* pEnd = a + end;

while (pStart != pEnd)

{

r += *pStart++;

}

Upvotes: -1

Reputation: 54634

If this is based on the actual sample problem, your issue isn't the sum. Your issue is how you calculate the equilibrium index. A naive implementation is O(n^2). An optimal solution is much much better.

Upvotes: 4

Reputation: 35594

In C++, the following:

int* a1 = a + begin;

for( int i = end - begin - 1; i >= 0 ; i-- )

{

r+= a1[i];

}

might be faster. The advantage is that we compare against zero in the loop.

Of course, with a really good optimizer there should be no difference at all.

Another possibility would be

int* a2 = a + end - 1;

for( int i = -(end - begin - 1); i <= 0 ; i++ )

{

r+= a2[i];

}

here we traversing the items in the same order, just not comparing to end.

Upvotes: 0

Reputation: 10074

Probably the fastest you could get would be to have your int array 16-byte aligned, stream 32 bytes into two __m128i variables (VC++) and call _mm_add_epi32 (again, a VC++ intrinsic) on the chunks. Reuse one of the chunks to keep adding into it and on the final chunk extract your four ints and add them the old fashioned way.

The bigger question is why simple addition is a worthy candidate for optimization.

Edit: I see it's mostly an academic exercise. Perhaps I'll give it a go tomorrow and post some results...

Upvotes: 2

Reputation: 28288

If you are using C or C++ and develop for modern desktop systems and are willing to learn some assembler or learn about GCC intrinsics, you could use SIMD instructions.

This library is an example of what is possible for float and double arrays, similar results should be possible for integer arithmetic since SSE has integer instructions as well.

Upvotes: 0

Reputation: 25542

I don't believe the problem is in the code you provided, but somehow the bigger solution must be suboptimal. This code looks good for calculating the sum of one slice of the array, but maybe it's not what you need to solve the whole problem.

Upvotes: 3

Related Questions

- Slow Sums Algorithm

- Looking for a faster way to sum arrays in C#

- Optimize summation code

- Subset Sum algorithm efficiency

- C# How to optimize a simple sum?

- How to improve the algorithm sum?

- More efficient way to build sum than for loop

- algorithm performance c#

- Create an efficient way to sum

- Accumulating sum in one line in C#