Reputation: 2805

In CUDA, what is memory coalescing, and how is it achieved?

What is "coalesced" in CUDA global memory transaction? I couldn't understand even after going through my CUDA guide. How to do it? In CUDA programming guide matrix example, accessing the matrix row by row is called "coalesced" or col.. by col.. is called coalesced? Which is correct and why?

Upvotes: 112

Views: 82890

Answers (5)

Reputation: 322

The behavior of global memory accesses is clearly explained in the CUDA C Best Practices Guide:

... the concurrent accesses of the threads of a warp will coalesce into a number of transactions equal to the number of 32-byte transactions necessary to service all of the threads of the warp.

The unit of data loads in the GPU is the 32-byte-aligned sector. So the GPU looks at the union of all the data requested by the threads of a warp with a single global memory load instruction and uses as many 32-byte-aligned transactions as it takes to load it. If the request can be broken down exactly into 32-byte-aligned chunks, then there will not be excess transactions. If the request has ragged edges that fall outside 32-byte boundaries, then there will be excess transactions.

The 32-byte sectors need not be contiguous in memory. Requesting 128 bytes would yield four 32-byte transactions whether it is one 128-byte-aligned buffer or four different 32-byte-aligned sectors.

Individual threads can request 4, 8, or 16 bytes at a time, therefore the 32 threads in a warp can request 512 bytes with a single instruction, but the request is still broken up into 32-byte transactions.

These rules apply to NVIDIA compute capability 6.0 and newer, that is, any GPU released since the P100 in 2016. The previous answers to this question predate that.

There is more to say about how the memory system works on NVIDIA GPUs, including how the sectors of a request are packaged into one or more "wavefronts" which are the units that the memory pipeline can process, and the granularity of device memory to L2 cache transfers (64 bytes, half of a 128-byte cache line). For those details and more, see this excellent presentation.

Upvotes: 3

Reputation: 498

Memory coalescing is a technique which allows optimal usage of the global memory bandwidth. That is, when parallel threads running the same instruction access to consecutive locations in the global memory, the most favorable access pattern is achieved.

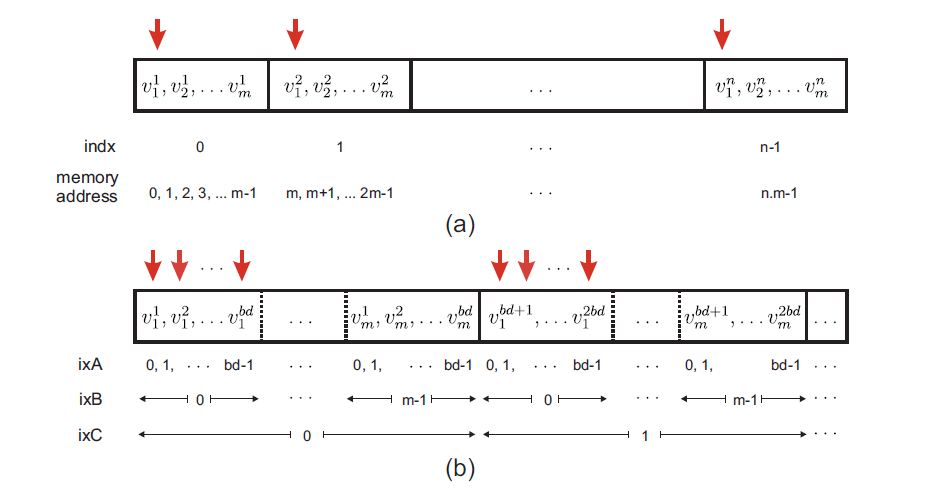

The example in Figure above helps explain the coalesced arrangement:

In Fig. (a), n vectors of length m are stored in a linear fashion. Element i of vector j is denoted by v j i. Each thread in GPU kernel is assigned to one m-length vector. Threads in CUDA are grouped in an array of blocks and every thread in GPU has a unique id which can be defined as indx=bd*bx+tx, where bd represents block dimension, bx denotes the block index and tx is the thread index in each block.

Vertical arrows demonstrate the case that parallel threads access to the first components of each vector, i.e. addresses 0, m, 2m... of the memory. As shown in Fig. (a), in this case the memory access is not consecutive. By zeroing the gap between these addresses (red arrows shown in figure above), the memory access becomes coalesced.

However, the problem gets slightly tricky here, since the allowed size of residing threads per GPU block is limited to bd. Therefore coalesced data arrangement can be done by storing the first elements of the first bd vectors in consecutive order, followed by first elements of the second bd vectors and so on. The rest of vectors elements are stored in a similar fashion, as shown in Fig. (b). If n (number of vectors) is not a factor of bd, it is needed to pad the remaining data in the last block with some trivial value, e.g. 0.

In the linear data storage in Fig. (a), component i (0 ≤ i < m) of vector indx

(0 ≤ indx < n) is addressed by m × indx +i; the same component in the coalesced

storage pattern in Fig. (b) is addressed as

(m × bd) ixC + bd × ixB + ixA,

where ixC = floor[(m.indx + j )/(m.bd)]= bx, ixB = j and ixA = mod(indx,bd) = tx.

In summary, in the example of storing a number of vectors with size m, linear indexing is mapped to coalesced indexing according to:

m.indx +i −→ m.bd.bx +i .bd +tx

This data rearrangement can lead to a significant higher memory bandwidth of GPU global memory.

source: "GPU‐based acceleration of computations in nonlinear finite element deformation analysis." International journal for numerical methods in biomedical engineering (2013).

Upvotes: 18

Reputation: 4422

The criteria for coalescing are nicely documented in the CUDA 3.2 Programming Guide, Section G.3.2. The short version is as follows: threads in the warp must be accessing the memory in sequence, and the words being accessed should >=32 bits. Additionally, the base address being accessed by the warp should be 64-, 128-, or 256-byte aligned for 32-, 64- and 128-bit accesses, respectively.

Tesla2 and Fermi hardware does an okay job of coalescing 8- and 16-bit accesses, but they are best avoided if you want peak bandwidth.

Note that despite improvements in Tesla2 and Fermi hardware, coalescing is BY NO MEANS obsolete. Even on Tesla2 or Fermi class hardware, failing to coalesce global memory transactions can result in a 2x performance hit. (On Fermi class hardware, this seems to be true only when ECC is enabled. Contiguous-but-uncoalesced memory transactions take about a 20% hit on Fermi.)

Upvotes: 4

Reputation: 139

If the threads in a block are accessing consecutive global memory locations, then all the accesses are combined into a single request(or coalesced) by the hardware. In the matrix example, matrix elements in row are arranged linearly, followed by the next row, and so on. For e.g 2x2 matrix and 2 threads in a block, memory locations are arranged as:

(0,0) (0,1) (1,0) (1,1)

In row access, thread1 accesses (0,0) and (1,0) which cannot be coalesced. In column access, thread1 accesses (0,0) and (0,1) which can be coalesced because they are adjacent.

Upvotes: 12

Reputation: 8365

It's likely that this information applies only to compute capabality 1.x, or cuda 2.0. More recent architectures and cuda 3.0 have more sophisticated global memory access and in fact "coalesced global loads" are not even profiled for these chips.

Also, this logic can be applied to shared memory to avoid bank conflicts.

A coalesced memory transaction is one in which all of the threads in a half-warp access global memory at the same time. This is oversimple, but the correct way to do it is just have consecutive threads access consecutive memory addresses.

So, if threads 0, 1, 2, and 3 read global memory 0x0, 0x4, 0x8, and 0xc, it should be a coalesced read.

In a matrix example, keep in mind that you want your matrix to reside linearly in memory. You can do this however you want, and your memory access should reflect how your matrix is laid out. So, the 3x4 matrix below

0 1 2 3

4 5 6 7

8 9 a b

could be done row after row, like this, so that (r,c) maps to memory (r*4 + c)

0 1 2 3 4 5 6 7 8 9 a b

Suppose you need to access element once, and say you have four threads. Which threads will be used for which element? Probably either

thread 0: 0, 1, 2

thread 1: 3, 4, 5

thread 2: 6, 7, 8

thread 3: 9, a, b

or

thread 0: 0, 4, 8

thread 1: 1, 5, 9

thread 2: 2, 6, a

thread 3: 3, 7, b

Which is better? Which will result in coalesced reads, and which will not?

Either way, each thread makes three accesses. Let's look at the first access and see if the threads access memory consecutively. In the first option, the first access is 0, 3, 6, 9. Not consecutive, not coalesced. The second option, it's 0, 1, 2, 3. Consecutive! Coalesced! Yay!

The best way is probably to write your kernel and then profile it to see if you have non-coalesced global loads and stores.

Upvotes: 205

Related Questions

- CUDA - Coalescing memory accesses and bus width

- CUDA coalescing and global memory

- Is coalescing triggered for accessing memory in reverse order?

- From non coalesced access to coalesced memory access CUDA

- Is incomplete global memory access coalesced?

- Memory coalescing and transaction

- CUDA: Why accessing the same device array is not coalesced?

- CUDA: When can someone achieve coalescing memory?

- Cuda coalesced memory load behavior

- cuda memory coalescing